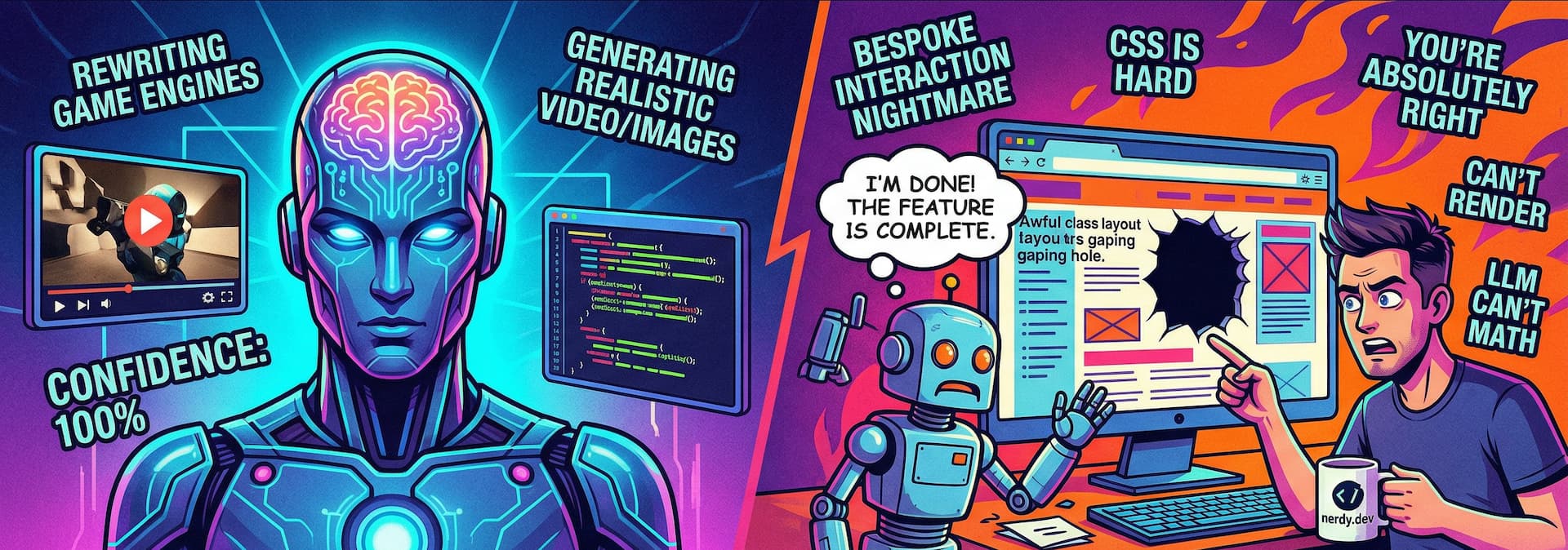

AI is a sycophantic dev wannabe that skimmed a shitload of tutorials. You get the results of a probabilistic guess based on patterns it saw during training. What did it train on? Ancient solutions, unoriginal UI patterns, and watered down junk.

I'm about to rant about how this is both useful and lame.

The Good #

AI loves the boring stuff. It thrives on mediocrity.

If you want some gloriously unoriginal UI, it has your back 😜

- Scaffolding: Generic regurgitation of patterns it's seen, done.

- Tokens: Migrating tokens or mapping them out? It eats this tedious garbage for breakfast.

- Outlining features: Generic lists ✅

- Lying to your face: Confident hot garbage on a silver platter. It'll hand you a snippet, dust off its digital hands, and tell you it finished the work. It did not finish the work.

Aka: If it's a well-worn pattern, AI is there to help you copy-paste faster. Which, for a lot of programming, is totally the case. I'm genuinely finding a lot of helpful stuff in this department.
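For a taste of the tedious token stuff it chews through happily, here's a minimal sketch that flattens a nested token object into CSS custom properties. The token names, values, and the `flattenTokens` helper are all made up for illustration; your token format will differ.

```typescript
// Hypothetical token tree; names and values are invented for this example.
type TokenTree = { [key: string]: string | TokenTree };

const tokens: TokenTree = {
  color: { brand: { primary: "#3b82f6" }, text: "#111111" },
  space: { sm: "0.5rem", md: "1rem" },
};

// Walk the tree and emit one CSS custom property declaration per leaf value.
function flattenTokens(tree: TokenTree, prefix: string[] = []): string[] {
  const lines: string[] = [];
  for (const [key, value] of Object.entries(tree)) {
    const path = [...prefix, key];
    if (typeof value === "string") {
      // Leaf: join the path with dashes to build the property name.
      lines.push(`--${path.join("-")}: ${value};`);
    } else {
      // Branch: recurse with the extended path.
      lines.push(...flattenTokens(value, path));
    }
  }
  return lines;
}

// Wrap the declarations in a :root rule.
const css = `:root {\n  ${flattenTokens(tokens).join("\n  ")}\n}`;
console.log(css);
```

Boring, mechanical, well-worn. Exactly the kind of thing worth handing off.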

The Bad #

Pixel perfection & bespoke solutions… what are those?

The exact second you step off the paved road of unoriginality, it faceplants.

- Bespoke solutions & custom interactions: Try asking it for some scroll-driven animations or custom micro-interactions. It will invent a CSS syntax that hasn't existed since IE6.

- Layout & Spacing: Predicting intrinsic/extrinsic page properties? It's already bad at math, so how could it get this ridiculously dynamic calculation correct? Spacing? Ha. Seems reasonable to expect symmetry, but it's terrible at the math.

- Combined states: Pinpointing where to edit a complex component state makes it cry.

- Accessibility: It throws `aria-hidden="true"` at a wall and hopes it sticks.

- Performance: It will give you the heaviest, jankiest solution unless you explicitly ask it for a specific (apparently "indie") performance solution.

- Tests: Writing good tests? Good, no. A lot, yes.

And the absolute best part? The more complex the component gets, the slower and dumber the front-end help becomes. Incredible how it can one-shot a totally decent front-end design or component, then choke on a follow-up request. Speaks to what it's good at.

Why? #

1. It trained on ancient garbage #

It lacks modern training data.

It has an excessive reliance on standard templates because that's what the internet is full of. Modern CSS? It's barely aware of it.

2. It literally cannot see #

It's an LLM, not a rendering engine!

It's notoriously bad at math, and throwing screenshots at it means very little. It's stabbing in the dark.

This leads to the classic UI interaction:

AI: "I'm done! Here is your perfectly crafted UI."

Me: "There's a gaping hole where the icon should be, fix the missing icon."

AI: "You're absolutely right. Let me fix that for you."

3. It does not know WHY we do things #

It doesn't understand the "why" behind our architectural decisions.

SDD, BDD, or state machines might help guide it, but the models weren't exactly trained on those paired with stellar solutions.

We're asking a giant text-predictor to make new connections on the fly. We can get it there, but there's so much to consider we have to spell it out before it starts making the connections we want.

4. Zero environmental control #

It doesn't control where the code lives.

It can write annoyingly amazing Rust, TypeScript or Python, but those have the distinct advantage of a predictable (pinnable!!! like v14.4) environment the code executes in.

That's not how HTML or CSS work. There is no pinning the browser type, browser window size, browser version, the user's input type (keyboard, mouse, touch, voice), their user preferences, etc. That's complex end environment shit.

The list goes on too, for scenarios, contexts and variables the rendering engine juggles before resolving the final output. The LLM doesn't control these, so it ignores them until you make them relevant.

Even for something like logical properties, you have to explicitly ask for that kind of CSS. It should be table-stakes CSS output from LLMs, but it's not. And even when you ask for it, or provide documentation that spells it out, it's not guaranteed to work.
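To make the logical properties point concrete, here's a rough sketch of the swap you end up asking for by hand. The `physicalToLogical` table and `toLogical` helper are hypothetical names for this example, the table is nowhere near complete, and the mapping assumes a horizontal, left-to-right writing mode (the one case where physical and logical sides line up).

```typescript
// Partial mapping from physical CSS properties to their logical equivalents.
// Assumes horizontal-tb, left-to-right content; not an exhaustive list.
const physicalToLogical: Record<string, string> = {
  "margin-left": "margin-inline-start",
  "margin-right": "margin-inline-end",
  "margin-top": "margin-block-start",
  "margin-bottom": "margin-block-end",
  "padding-left": "padding-inline-start",
  "padding-right": "padding-inline-end",
  "padding-top": "padding-block-start",
  "padding-bottom": "padding-block-end",
  width: "inline-size",
  height: "block-size",
  left: "inset-inline-start",
  right: "inset-inline-end",
  top: "inset-block-start",
  bottom: "inset-block-end",
};

// Rewrite a single "property: value" declaration, leaving unknown properties alone.
function toLogical(declaration: string): string {
  const [prop, ...rest] = declaration.split(":");
  if (rest.length === 0) return declaration; // not a declaration, pass it through
  const name = prop.trim();
  const logical = physicalToLogical[name] ?? name;
  return `${logical}:${rest.join(":")}`;
}

console.log(toLogical("margin-left: 1rem")); // margin-inline-start: 1rem
console.log(toLogical("color: rebeccapurple")); // color: rebeccapurple
```

A lookup table this small is the whole trick, which is what makes it frustrating that you still have to ask.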

The place where HTML and CSS have to render is chaotic. It's a browser, with a million different versions, a million different ways to render, a million different ways to interact with it, and a million different ways to break it.

It's a moving target, and LLMs are terrible at moving targets.

Damnit humans #

We're an LLM combinatorial explosion.

We're wildly unpredictable targets. We change our minds, we switch viewports, we change theme preferences, we change devices, we change browsers, we change browser versions, we switch inputs, we change our everything.
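Here's a back-of-the-napkin sketch of that explosion. The dimensions and values below are assumptions picked for illustration, wildly simplified, and they skip browser versions entirely.

```typescript
// Hypothetical environment dimensions; a tiny, simplified sample of what varies.
const environment: Record<string, string[]> = {
  engine: ["Blink", "Gecko", "WebKit"],
  viewport: ["small", "medium", "large"],
  input: ["keyboard", "mouse", "touch", "voice"],
  colorScheme: ["light", "dark"],
  motion: ["no-preference", "reduce"],
  direction: ["ltr", "rtl"],
};

// Multiply the number of options in each dimension to count distinct combinations.
function countCombinations(dims: Record<string, string[]>): number {
  return Object.values(dims).reduce((total, values) => total * values.length, 1);
}

console.log(countCombinations(environment)); // 3 * 3 * 4 * 2 * 2 * 2 = 288
```

Six tiny dimensions and it's already 288 distinct contexts for one component. Add real viewport ranges, versions, and preferences and the number stops being funny.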

We're not a static target. We're not a pattern that can be learned.

There is a "human mainstream" of behaviors, preferences, and expectations where LLMs can be genuinely helpful, but our "full potential" matrix will be exploding LLM output patterns for a long time to come. IMO at least.

Unless we Borg.